As generative AI becomes more deeply integrated across organizations, strong guardrails are critical. They help reduce risk, support ethical use, and maintain trust, while still allowing innovation to move forward.

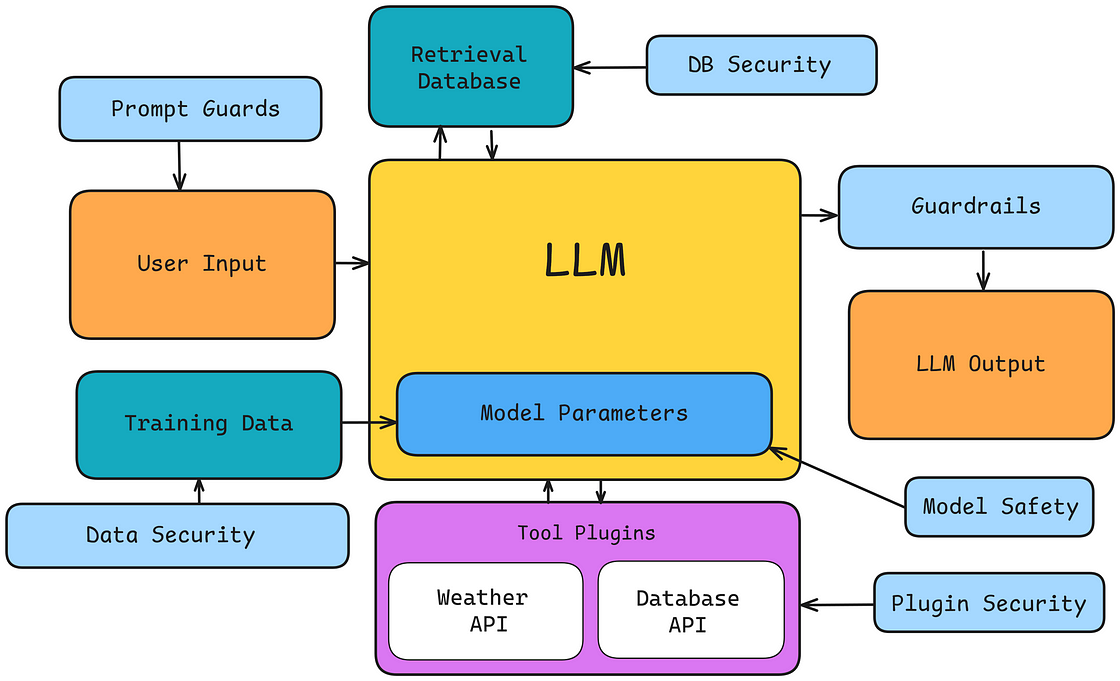

To address this, JPMorganChase introduced a guardrail system called Fence. This framework takes a data-driven approach to identifying, testing, and reducing vulnerabilities in large language models (LLMs). It targets common risks like hallucinations, topic drift, and prompt injection, applying safeguards tailored to specific use cases to improve both security and reliability.

One of Fence’s key advantages is its flexibility. By using synthetic data generation, it can create customized guardrails suited to different teams, workflows, and client needs. This adaptability makes it a scalable solution with potential applications across the broader industry.

Fence is already improving how employees and customers interact with AI systems in applications ranging from basic question-answering tools to more advanced search. Internal performance benchmarks suggest it delivers greater safety and reliability than many existing solutions.

As AI technology continues to evolve, ongoing innovation in guardrail frameworks like Fence will be essential, especially in highly regulated sectors such as financial services, where trust and compliance are paramount.